
Sparse Inversion with Iteratively Re-Weighted Least-Squares

Least-squares inversion produces smooth models, which may not accurately represent the true model. Here we demonstrate the basics of inverting for sparse and/or blocky models using the iteratively re-weighted least-squares (IRLS) approach. In this tutorial, we focus on the following:

- Defining the forward problem
- Defining the inverse problem (data misfit, regularization, optimization)
- Defining the parameters for the IRLS algorithm
- Specifying directives for the inversion
- Recovering a set of model parameters that explains the observations
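The core IRLS idea can be sketched in a few lines of NumPy. Assume a regularized least-squares objective $\|\mathbf{G}\mathbf{m}-\mathbf{d}\|^2 + \beta\sum_i |m_i|^p$. IRLS replaces each $|m_i|^p$ with a weighted quadratic $r_i m_i^2$ and recomputes the weights $r_i = (m_i^2+\epsilon^2)^{p/2-1}$ from the previous model, so each iteration only needs one linear solve. This is a generic textbook loop with fixed $\beta$ and $\epsilon$. The function `irls_sparse` is hypothetical and is not SimPEG's implementation. SimPEG's version, judging by the `Update_IRLS` directive and its log output later in this tutorial, also adjusts $\beta$ and the threshold $\epsilon$ during the inversion.

```python
import numpy as np


def irls_sparse(G, d, beta, p=0.0, eps=1e-2, n_iter=30, tol=1e-6):
    """Generic IRLS sketch for min ||G m - d||^2 + beta * sum_i |m_i|**p.

    For p = 0 the penalty approximates a count of nonzero entries.
    Each |m_i|**p is replaced by r_i * m_i**2 with
    r_i = (m_i**2 + eps**2) ** (p / 2 - 1), evaluated at the previous model.
    """
    # Start from the plain least-squares solution (stand-in for an L2 phase)
    m = np.linalg.lstsq(G, d, rcond=None)[0]
    GtG, Gtd = G.T @ G, G.T @ d
    for _ in range(n_iter):
        r = (m**2 + eps**2) ** (p / 2.0 - 1.0)  # weights from the current model
        # Normal equations of the re-weighted problem: (G^T G + beta R) m = G^T d
        m_new = np.linalg.solve(GtG + beta * np.diag(r), Gtd)
        converged = np.linalg.norm(m_new - m) <= tol * (1.0 + np.linalg.norm(m))
        m = m_new
        if converged:
            break
    return m


# Toy denoising problem (G = identity): two large and two small entries
G = np.eye(4)
d = np.array([1.0, 0.05, -0.8, 0.02])
m = irls_sparse(G, d, beta=0.1)
print(np.round(m, 3))  # approximately [0.887, 0.0, -0.645, 0.0]
```

With `p=0.0`, the two small entries collapse to essentially zero, while the two large ones survive with some shrinkage. With `p=2.0`, every weight equals one and the loop reduces to ordinary damped least squares.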

import numpy as np
import matplotlib.pyplot as plt

from discretize import TensorMesh

from SimPEG import (
    simulation,
    maps,
    data_misfit,
    directives,
    optimization,
    regularization,
    inverse_problem,
    inversion,
)

# sphinx_gallery_thumbnail_number = 3

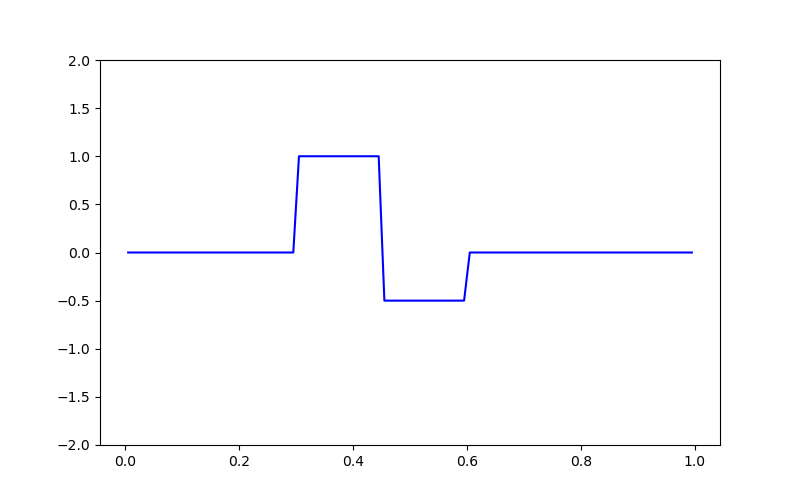

Defining the Model and Mapping

Here we generate a synthetic model and a mapping that goes from the model space to the row space of our linear operator.

nParam = 100  # Number of model parameters

# A 1D mesh is used to define the row space of the linear operator.
mesh = TensorMesh([nParam])

# Creating the true model
true_model = np.zeros(mesh.nC)
true_model[mesh.cell_centers_x > 0.3] = 1.0
true_model[mesh.cell_centers_x > 0.45] = -0.5
true_model[mesh.cell_centers_x > 0.6] = 0

# Mapping from the model space to the row space of the linear operator
model_map = maps.IdentityMap(mesh)

# Plotting the true model
fig = plt.figure(figsize=(8, 5))
ax = fig.add_subplot(111)
ax.plot(mesh.cell_centers_x, true_model, "b-")
ax.set_ylim([-2, 2])


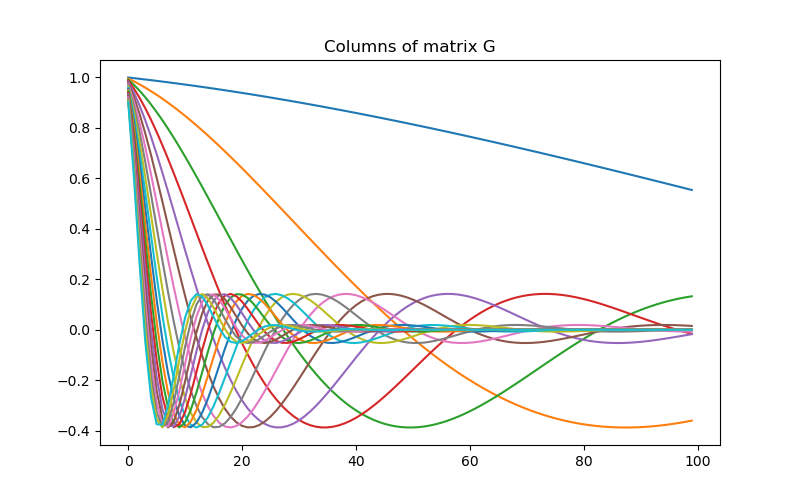
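The true model is piecewise constant. It is therefore both sparse (few nonzero cells) and blocky (few jumps between neighboring cells), which is the kind of structure a smooth least-squares inversion tends to smear out. The stand-alone sketch below rebuilds the same model with NumPy only and counts both quantities. It assumes that `TensorMesh([100])` is a uniform mesh of 100 cells on [0, 1].

```python
import numpy as np

# Assumption: TensorMesh([100]) has 100 uniform cells on [0, 1],
# so the cell centers are (i + 0.5) / 100.
n_cells = 100
cell_centers_x = (np.arange(n_cells) + 0.5) / n_cells

true_model = np.zeros(n_cells)
true_model[cell_centers_x > 0.3] = 1.0
true_model[cell_centers_x > 0.45] = -0.5
true_model[cell_centers_x > 0.6] = 0

n_nonzero = np.count_nonzero(true_model)         # cells inside the two blocks
n_jumps = np.count_nonzero(np.diff(true_model))  # discontinuities between cells
print(n_nonzero, n_jumps)  # 30 3
```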

Defining the Linear Operator

Here we define the linear operator with dimensions (nData, nParam). In practice, you may have a problem-specific linear operator that you would like to construct or load here.

# Number of data observations (rows)
nData = 20

# Create the linear operator for the tutorial. The rows of the linear operator
# represent a set of decaying and oscillating functions.
jk = np.linspace(1.0, 60.0, nData)
p = -0.25
q = 0.25


def g(k):
    return np.exp(p * jk[k] * mesh.cell_centers_x) * np.cos(
        np.pi * q * jk[k] * mesh.cell_centers_x
    )


G = np.empty((nData, nParam))

for i in range(nData):
    G[i, :] = g(i)

# Plot the rows of G
fig = plt.figure(figsize=(8, 5))
ax = fig.add_subplot(111)
for i in range(G.shape[0]):
    ax.plot(G[i, :])

ax.set_title("Rows of matrix G")

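Each row of G samples one decaying cosine, so the row-by-row loop can also be written with NumPy broadcasting. The stand-alone sketch below checks that the two constructions agree. It uses uniform cell centers on [0, 1] (an assumption standing in for `mesh.cell_centers_x`).

```python
import numpy as np

n_data, n_param = 20, 100
x = (np.arange(n_param) + 0.5) / n_param  # assumed cell centers on [0, 1]
jk = np.linspace(1.0, 60.0, n_data)
p, q = -0.25, 0.25

# Broadcasting: jk[:, None] has shape (n_data, 1), x[None, :] has shape (1, n_param)
arg = jk[:, None] * x[None, :]
G_vec = np.exp(p * arg) * np.cos(np.pi * q * arg)

# Reference construction with the same row-by-row loop as the tutorial
G_loop = np.empty((n_data, n_param))
for i in range(n_data):
    G_loop[i, :] = np.exp(p * jk[i] * x) * np.cos(np.pi * q * jk[i] * x)

print(G_vec.shape, np.allclose(G_vec, G_loop))  # (20, 100) True
```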

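Defining the Simulation

The simulation defines the relationship between the model parameters and the predicted data. For this linear problem, we build a linear simulation from the operator G and the model mapping.

# Define the linear simulation
sim = simulation.LinearSimulation(mesh, G=G, model_map=model_map)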
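For this linear problem, the relationship can be written explicitly. Here $\mathcal{M}$ denotes the model mapping (an identity map in this tutorial) and $\mathbf{G}$ the linear operator built above. The predicted data and the sensitivity matrix are:

```latex
\mathbf{d}^{\mathrm{pred}} = \mathbf{G}\,\mathcal{M}(\mathbf{m}) = \mathbf{G}\,\mathbf{m},
\qquad
\mathbf{J} = \frac{\partial \mathbf{d}^{\mathrm{pred}}}{\partial \mathbf{m}} = \mathbf{G}
```

Because $\mathbf{J}$ does not depend on $\mathbf{m}$, the data misfit is exactly quadratic in the model. The non-quadratic part of the problem comes from the sparse regularization and the bound constraints.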

Predict Synthetic Data

Here, we use the true model to create synthetic data which we will subsequently invert.

# Standard deviation of Gaussian noise being added
std = 0.02
np.random.seed(1)

# Create a SimPEG data object
data_obj = sim.make_synthetic_data(true_model, noise_floor=std, add_noise=True)
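Conceptually, `make_synthetic_data` with `add_noise=True` evaluates the forward model on the true model and adds zero-mean Gaussian noise. With no relative error, the noise standard deviation is set by `noise_floor`. The NumPy sketch below mimics that step and shows why the normalized misfit of the true model is expected to be close to the number of data. It uses a random stand-in operator rather than the tutorial's G, and it is not SimPEG's code.

```python
import numpy as np

rng = np.random.default_rng(1)
n_data, n_param = 20, 100
std = 0.02  # noise standard deviation, as in the tutorial

# Hypothetical stand-ins: a random operator and a two-block model
G = rng.standard_normal((n_data, n_param))
m_true = np.zeros(n_param)
m_true[30:45] = 1.0
m_true[45:60] = -0.5

# Synthetic data: clean predictions plus zero-mean Gaussian noise
d_clean = G @ m_true
noise = std * rng.standard_normal(n_data)
d_obs = d_clean + noise

# Normalized misfit of the true model: a sum of n_data squared standard normals
phi_d_true = np.sum(((G @ m_true - d_obs) / std) ** 2)
print(f"phi_d(m_true) = {phi_d_true:.2f}, expected value = {n_data}")
```

Each normalized residual is a standard normal draw. The misfit of the true model therefore follows a chi-squared distribution with `n_data` degrees of freedom and has expected value `n_data`, which is why target misfits are commonly set near the number of data.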

Define the Inverse Problem

The inverse problem is defined by three things:

- Data misfit: a measure of how well our recovered model explains the field data
- Regularization: constraints placed on the recovered model and a priori information
- Optimization: the numerical approach used to solve the inverse problem
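A generic form of the objective function defined by these three components is shown below. In it, $\sigma_i$ is the standard deviation of datum $i$, $N$ is the number of data, and the model term uses the Lawson-type approximation common in IRLS, evaluated with the previous iterate $\mathbf{m}^{(k-1)}$. The weights $\alpha_s$ and $\alpha_x$, the difference operator $\Delta$, and the exact scaling are illustrative assumptions. SimPEG's `Sparse` regularization may add its own normalizations and cell-size weighting, and some implementations include a factor of 1/2 in $\phi_d$.

```latex
\min_{\mathbf{m}}\; \phi(\mathbf{m}) = \phi_d(\mathbf{m}) + \beta\,\phi_m(\mathbf{m}),
\qquad
\phi_d(\mathbf{m}) = \sum_{i=1}^{N}\left(\frac{(\mathbf{G}\mathbf{m})_i - d_i^{\mathrm{obs}}}{\sigma_i}\right)^2

\phi_m(\mathbf{m}) \approx
\alpha_s \sum_j \frac{\left(m_j - m_j^{\mathrm{ref}}\right)^2}
{\left(\left(m_j^{(k-1)} - m_j^{\mathrm{ref}}\right)^2 + \epsilon^2\right)^{1 - p/2}}
+ \alpha_x \sum_j \frac{\left(\Delta \mathbf{m}\right)_j^2}
{\left(\left(\Delta \mathbf{m}^{(k-1)}\right)_j^2 + \epsilon^2\right)^{1 - q/2}}
```

With $p = q = 2$, the denominators equal one and $\phi_m$ is the usual least-squares regularization. With $p, q < 2$, small values and small differences receive larger weights, which favors sparse and blocky models.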

# Define the data misfit. Here the data misfit is the squared L2 norm of the
# weighted residual between the observed data and the data predicted for a given
# model. Within the data misfit, the residual between predicted and observed data
# is normalized by the data's standard deviation.
dmis = data_misfit.L2DataMisfit(simulation=sim, data=data_obj)

# Define the regularization (model objective function). Here, 'p' defines the
# norm of the smallness term and 'q' defines the norm of the smoothness term.
# Note: this reuses the names p and q from the linear operator; G is already built.
reg = regularization.Sparse(mesh, mapping=model_map)
reg.reference_model = np.zeros(nParam)
p = 0.0
q = 0.0
reg.norms = [p, q]

# Define how the optimization problem is solved.
opt = optimization.ProjectedGNCG(
    maxIter=100, lower=-2.0, upper=2.0, maxIterLS=20, maxIterCG=30, tolCG=1e-4
)

# Here we define the inverse problem that is to be solved
inv_prob = inverse_problem.BaseInvProblem(dmis, reg, opt)
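With `p = 0` and `q = 0`, the smallness term approximately counts the cells that differ from the reference model (here zero), and the smoothness term approximately counts the jumps in the model. The NumPy sketch below evaluates a Lawson-type approximation of these measures on a piecewise-constant model. `lawson_measure` is a hypothetical helper, and plain first differences stand in for the gradient operator that `regularization.Sparse` actually applies.

```python
import numpy as np


def lawson_measure(x, p, eps):
    """Approximate sum(|x|**p) by sum(x**2 / (x**2 + eps**2) ** (1 - p / 2))."""
    return np.sum(x**2 / (x**2 + eps**2) ** (1.0 - p / 2.0))


# Piecewise-constant model like the tutorial's true model (zero reference model)
m = np.zeros(100)
m[30:45] = 1.0
m[45:60] = -0.5

eps = 1e-3
print(lawson_measure(m, p=0.0, eps=eps))           # close to 30 (nonzero cells)
print(lawson_measure(np.diff(m), p=0.0, eps=eps))  # close to 3 (jumps)
print(lawson_measure(m, p=2.0, eps=eps))           # exactly sum(m**2) = 18.75
```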

Define Inversion Directives

Here we define the directives that are carried out during the inversion. These include the cooling schedule for the trade-off parameter (beta), the stopping criteria for the inversion, and saving the inversion results at each iteration.

# Add sensitivity weights but don't update at each beta
sensitivity_weights = directives.UpdateSensitivityWeights(every_iteration=False)

# Reach target misfit for L2 solution, then use IRLS until model stops changing.
IRLS = directives.Update_IRLS(max_irls_iterations=40, minGNiter=1, f_min_change=1e-4)

# Define a starting value for the trade-off parameter (beta) between the data
# misfit and the regularization.
starting_beta = directives.BetaEstimate_ByEig(beta0_ratio=1e0)

# Update the preconditioner
update_Jacobi = directives.UpdatePreconditioner()

# Save output at each iteration
saveDict = directives.SaveOutputEveryIteration(save_txt=False)

# Define the directives as a list
directives_list = [sensitivity_weights, IRLS, starting_beta, update_Jacobi, saveDict]
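Roughly speaking, `BetaEstimate_ByEig` chooses the starting beta from the ratio of the largest eigenvalues of the data-misfit and regularization Hessians, scaled by `beta0_ratio`, with the eigenvalues estimated by power iteration. The sketch below illustrates that idea on a random stand-in operator with an identity regularization Hessian. Both matrices and the helper `largest_eig_power` are hypothetical, and SimPEG's own estimate may differ in detail.

```python
import numpy as np


def largest_eig_power(A, n_iter=1000, seed=0):
    """Estimate the largest eigenvalue of a symmetric positive semi-definite matrix."""
    v = np.random.default_rng(seed).standard_normal(A.shape[0])
    for _ in range(n_iter):
        v = A @ v
        v /= np.linalg.norm(v)
    return v @ A @ v  # Rayleigh quotient of the unit-norm iterate


rng = np.random.default_rng(0)
std = 0.02
G = rng.standard_normal((20, 100))  # hypothetical stand-in for the linear operator
Gw = G / std                        # rows weighted by 1 / std, i.e. W_d G
A_data = Gw.T @ Gw                  # data-misfit Hessian (up to a constant factor)
A_reg = np.eye(100)                 # smallness-term Hessian (up to a constant factor)

beta0_ratio = 1.0
beta0 = beta0_ratio * largest_eig_power(A_data) / largest_eig_power(A_reg)
print(f"beta0 = {beta0:.3e}")
```

Matching the two largest eigenvalues puts the data misfit and the regularization on a comparable footing at the first iteration. `beta0_ratio` then shifts that balance up or down.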

Setting a Starting Model and Running the Inversion

To define the inversion object, we need to combine the inverse problem and the set of directives. We can then run the inversion.

# Here we combine the inverse problem and the set of directives
inv = inversion.BaseInversion(inv_prob, directives_list)

# Starting model
starting_model = 1e-4 * np.ones(nParam)

# Run inversion
recovered_model = inv.run(starting_model)

SimPEG.InvProblem is setting bfgsH0 to the inverse of the eval2Deriv.
***Done using the default solver Pardiso and no solver_opts.***
model has any nan: 0
=============================== Projected GNCG ===============================
# beta phi_d phi_m f |proj(x-g)-x| LS Comment
-----------------------------------------------------------------------------
x0 has any nan: 0
0 2.27e+06 3.68e+03 1.03e-09 3.68e+03 2.00e+01 0
1 1.13e+06 2.08e+03 2.58e-04 2.38e+03 1.92e+01 0
2 5.66e+05 1.52e+03 6.19e-04 1.87e+03 1.90e+01 0 Skip BFGS
3 2.83e+05 9.67e+02 1.32e-03 1.34e+03 1.83e+01 0 Skip BFGS
4 1.42e+05 5.30e+02 2.41e-03 8.71e+02 1.72e+01 0 Skip BFGS
5 7.08e+04 2.50e+02 3.79e-03 5.18e+02 1.41e+01 0 Skip BFGS
6 3.54e+04 1.03e+02 5.22e-03 2.88e+02 1.19e+01 0 Skip BFGS
7 1.77e+04 3.97e+01 6.45e-03 1.54e+02 9.97e+00 0 Skip BFGS
Reached starting chifact with l2-norm regularization: Start IRLS steps...
irls_threshold 1.2285608123285758
8 8.85e+03 1.64e+01 1.01e-02 1.06e+02 4.83e+00 0 Skip BFGS
9 1.54e+04 1.35e+01 1.17e-02 1.94e+02 1.74e+01 0
10 1.08e+04 3.05e+01 1.14e-02 1.54e+02 5.42e+00 0
11 7.99e+03 2.76e+01 1.25e-02 1.27e+02 5.51e+00 0
12 6.23e+03 2.47e+01 1.31e-02 1.06e+02 5.15e+00 0 Skip BFGS
13 6.23e+03 2.20e+01 1.32e-02 1.04e+02 8.91e+00 0 Skip BFGS
14 5.08e+03 2.27e+01 1.26e-02 8.68e+01 5.17e+00 0
15 5.08e+03 1.96e+01 1.20e-02 8.05e+01 7.36e+00 0
16 5.08e+03 1.87e+01 1.10e-02 7.44e+01 7.90e+00 0
17 7.92e+03 1.79e+01 9.90e-03 9.63e+01 1.53e+01 0
18 6.32e+03 2.36e+01 8.50e-03 7.74e+01 7.40e+00 0
19 6.32e+03 2.08e+01 7.65e-03 6.92e+01 1.05e+01 0
20 6.32e+03 1.93e+01 6.62e-03 6.12e+01 1.08e+01 0
21 9.93e+03 1.75e+01 5.61e-03 7.33e+01 1.47e+01 0
22 9.93e+03 2.04e+01 4.49e-03 6.50e+01 1.20e+01 0
23 9.93e+03 1.89e+01 3.85e-03 5.72e+01 1.18e+01 0
24 1.58e+04 1.69e+01 3.27e-03 6.86e+01 1.58e+01 0
25 1.58e+04 1.98e+01 2.59e-03 6.07e+01 1.19e+01 0
26 1.58e+04 1.90e+01 2.28e-03 5.51e+01 1.25e+01 0 Skip BFGS
27 1.58e+04 1.80e+01 2.03e-03 5.00e+01 1.29e+01 0
28 2.52e+04 1.68e+01 1.78e-03 6.16e+01 1.68e+01 0
29 2.52e+04 1.86e+01 1.40e-03 5.39e+01 1.18e+01 0
30 2.52e+04 1.81e+01 1.16e-03 4.73e+01 1.17e+01 0 Skip BFGS
31 3.98e+04 1.73e+01 9.65e-04 5.57e+01 1.69e+01 0
32 6.19e+04 1.80e+01 7.85e-04 6.65e+01 1.70e+01 0
33 6.19e+04 1.99e+01 5.82e-04 5.59e+01 1.10e+01 0
34 6.19e+04 1.88e+01 4.92e-04 4.93e+01 1.23e+01 0
35 9.73e+04 1.75e+01 4.28e-04 5.91e+01 1.69e+01 0
36 9.73e+04 1.82e+01 3.40e-04 5.13e+01 1.16e+01 0
37 1.54e+05 1.70e+01 2.80e-04 6.03e+01 1.72e+01 0
38 2.42e+05 1.77e+01 2.22e-04 7.13e+01 1.70e+01 0
39 2.42e+05 1.88e+01 1.83e-04 6.30e+01 1.16e+01 0
40 2.42e+05 1.81e+01 1.54e-04 5.52e+01 1.17e+01 0
41 3.84e+05 1.70e+01 1.30e-04 6.69e+01 1.74e+01 0
42 6.00e+05 1.78e+01 1.08e-04 8.24e+01 1.71e+01 0
43 6.00e+05 1.94e+01 8.86e-05 7.25e+01 1.18e+01 0
44 6.00e+05 1.88e+01 7.42e-05 6.33e+01 1.16e+01 0
45 9.38e+05 1.77e+01 6.26e-05 7.64e+01 1.69e+01 0
46 9.38e+05 1.88e+01 5.09e-05 6.65e+01 1.14e+01 0
47 9.38e+05 1.81e+01 4.19e-05 5.74e+01 1.16e+01 0
Reach maximum number of IRLS cycles: 40
------------------------- STOP! -------------------------
1 : |fc-fOld| = 0.0000e+00 <= tolF*(1+|f0|) = 3.6816e+02
0 : |xc-x_last| = 1.1286e-01 <= tolX*(1+|x0|) = 1.0010e-01
0 : |proj(x-g)-x| = 1.1565e+01 <= tolG = 1.0000e-01
0 : |proj(x-g)-x| = 1.1565e+01 <= 1e3*eps = 1.0000e-02
0 : maxIter = 100 <= iter = 48
------------------------- DONE! -------------------------
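The `|proj(x-g)-x|` column in the log above measures how far a projected gradient step would move the model within the bounds set by `lower=-2.0` and `upper=2.0`. It reaches zero at a point that is stationary for the bound-constrained problem. The NumPy sketch below computes that quantity, assuming the projection is a simple clip onto the box. The helper name is hypothetical.

```python
import numpy as np


def projected_gradient_norm(x, g, lower=-2.0, upper=2.0):
    """Norm of proj(x - g) - x, where proj clips onto the box [lower, upper]."""
    return np.linalg.norm(np.clip(x - g, lower, upper) - x)


x = np.array([1.9, 0.0, -1.5])  # current model
g = np.array([-0.5, 0.3, 1.0])  # gradient at x
print(projected_gradient_norm(x, g))  # sqrt(0.35), about 0.5916

# A model on the upper bound whose descent direction points outside the box
print(projected_gradient_norm(np.array([2.0]), np.array([-1.0])))  # 0.0
```

The second case shows why a model pressed against a bound can still count as converged even though its raw gradient is not zero.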

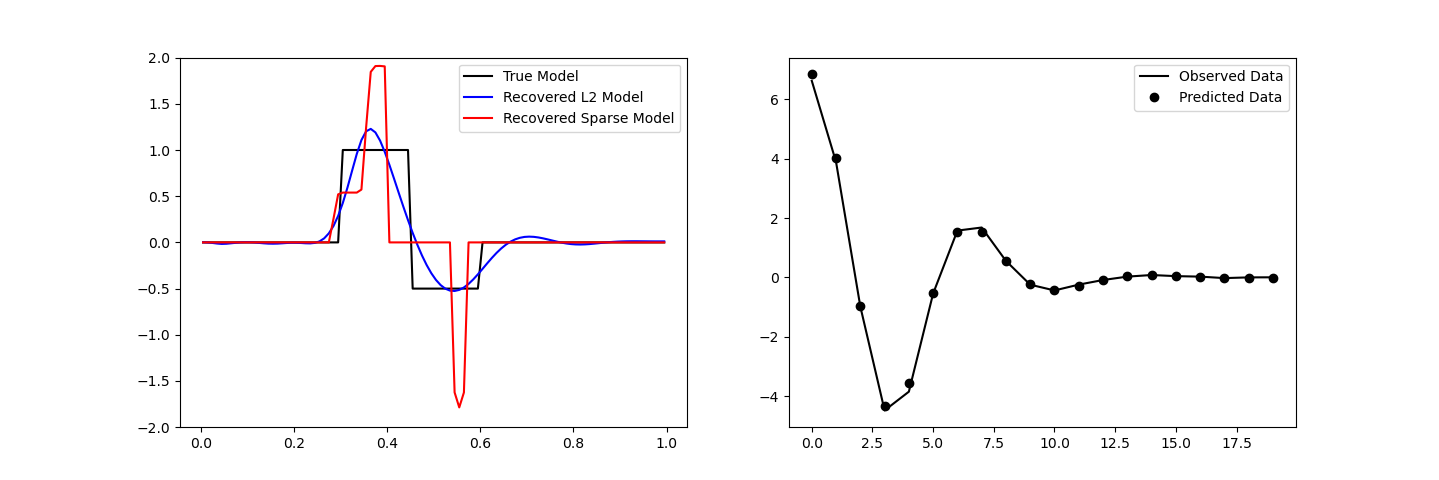

Plotting Results

fig, ax = plt.subplots(1, 2, figsize=(12 * 1.2, 4 * 1.2))

# True versus recovered model
ax[0].plot(mesh.cell_centers_x, true_model, "k-")
ax[0].plot(mesh.cell_centers_x, inv_prob.l2model, "b-")
ax[0].plot(mesh.cell_centers_x, recovered_model, "r-")
ax[0].legend(("True Model", "Recovered L2 Model", "Recovered Sparse Model"))
ax[0].set_ylim([-2, 2])

# Observed versus predicted data
ax[1].plot(data_obj.dobs, "k-")
ax[1].plot(inv_prob.dpred, "ko")
ax[1].legend(("Observed Data", "Predicted Data"))

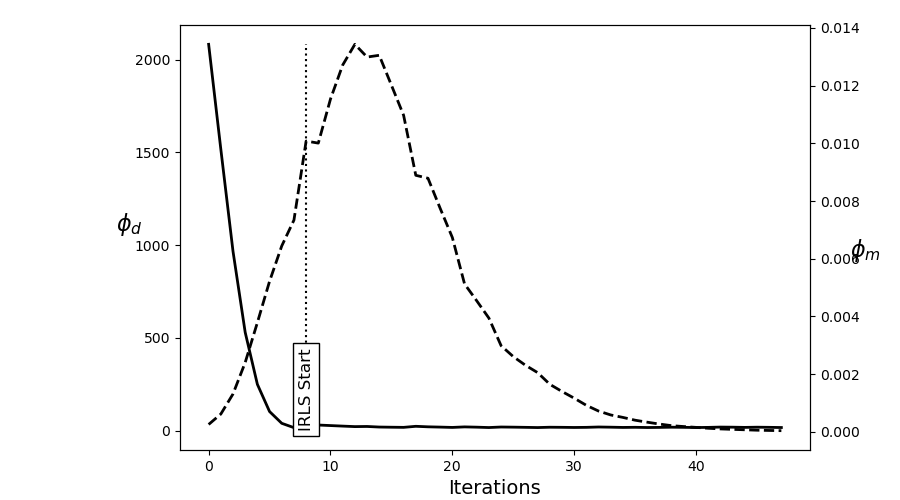

# Plot convergence
fig = plt.figure(figsize=(9, 5))
ax = fig.add_axes([0.2, 0.1, 0.7, 0.85])
ax.plot(saveDict.phi_d, "k", lw=2)

twin = ax.twinx()
twin.plot(saveDict.phi_m, "k--", lw=2)
ax.plot(np.r_[IRLS.iterStart, IRLS.iterStart], np.r_[0, np.max(saveDict.phi_d)], "k:")
ax.text(
    IRLS.iterStart,
    0.0,
    "IRLS Start",
    va="bottom",
    ha="center",
    rotation="vertical",
    size=12,
    bbox={"facecolor": "white"},
)

ax.set_ylabel(r"$\phi_d$", size=16, rotation=0)
ax.set_xlabel("Iterations", size=14)
twin.set_ylabel(r"$\phi_m$", size=16, rotation=0)

